There are mandatory network card performance settings for Windows and network card performance settings for Linux, which influence the performance of the system and the stability of the data transfer over the network card.

This section describes which settings should be optimized for maximum performance and minimal CPU usage. It also shows which features can have a massive impact on the stability of the data transfer.

Wrong settings can cause so many lost packets that entire images are discarded.

The available NIC performance settings depend on the operating system in use and are described in detail in the following sections.

Receive Buffers

Sets the number of buffers used by the driver when copying data to the protocol memory.

Increasing this value can enhance the receive performance, but also consumes system memory.

Receive Descriptors are data segments that enable the adapter to allocate received packets to memory.

Each received packet requires one Receive Descriptor, and each descriptor uses 2 KB of memory.
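The memory cost of a larger descriptor count follows directly from the 2 KB per descriptor stated above. The sketch below only illustrates that arithmetic; the function name and the example counts are hypothetical and not taken from any driver.

```python
DESCRIPTOR_BYTES = 2 * 1024  # 2 KB per Receive Descriptor, as stated above


def receive_descriptor_memory(num_descriptors: int) -> int:
    """Return the memory in bytes used by the given number of Receive Descriptors."""
    if num_descriptors < 0:
        raise ValueError("num_descriptors must not be negative")
    return num_descriptors * DESCRIPTOR_BYTES


# Example counts are illustrative; check the range your adapter actually offers.
for count in (256, 512, 2048):
    kib = receive_descriptor_memory(count) / 1024
    print(f"{count} descriptors use {kib:.0f} KB")
```

Even at the largest example count the memory cost stays in the low megabytes per adapter, which is usually small compared with the cost of lost packets.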

Flow Control

Enables adapters to generate or respond to flow control frames, which help regulate network traffic.

Interrupt Moderation Rate

Sets the Interrupt Throttle Rate (ITR), the rate at which the controller moderates interrupts.

The default setting is optimized for common configurations. Changing this setting can improve network performance on certain network and system configurations.

When an event occurs, the adapter generates an interrupt which allows the driver to handle the packet.

At higher link speeds, more interrupts are generated and CPU utilization increases, which degrades system performance.

With a higher ITR setting, the interrupt rate is lower, which improves system performance.

NOTE: A higher ITR setting also means the driver has more latency in handling packets. If the adapter is handling many small packets, lower the ITR so the driver is more responsive to incoming and outgoing packets.
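To get a feel for why interrupts become a problem at higher link speeds, the following sketch estimates how many full-size packets per second a saturated link delivers and how many packets the driver would handle per interrupt at a given interrupt rate. The per-frame wire overhead of 38 bytes (preamble, Ethernet header, FCS, inter-frame gap) and the interrupt rate of 8000 per second are assumptions chosen for illustration, not values from a specific driver.

```python
# Assumed bytes on the wire per frame in addition to the MTU payload:
# preamble + SFD (8) + Ethernet header (14) + FCS (4) + inter-frame gap (12).
WIRE_OVERHEAD_BYTES = 8 + 14 + 4 + 12


def packets_per_second(link_bps: float, mtu_bytes: int) -> float:
    """Maximum number of full-size frames per second on a saturated link."""
    return link_bps / ((mtu_bytes + WIRE_OVERHEAD_BYTES) * 8)


def packets_per_interrupt(link_bps: float, mtu_bytes: int,
                          interrupts_per_second: float) -> float:
    """Average number of packets the driver handles per interrupt."""
    return packets_per_second(link_bps, mtu_bytes) / interrupts_per_second


# 8000 interrupts/s is a hypothetical moderation rate used only for comparison.
for link_bps in (1e9, 10e9):
    pps = packets_per_second(link_bps, 1500)
    ppi = packets_per_interrupt(link_bps, 1500, 8000)
    print(f"{link_bps / 1e9:.0f} Gb/s: {pps:,.0f} packets/s, "
          f"{ppi:.1f} packets per interrupt")
```

Without moderation, every one of these packets could trigger its own interrupt; with moderation, several packets are handled per interrupt at the cost of added latency.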

Jumbo Frames / Jumbo Packets

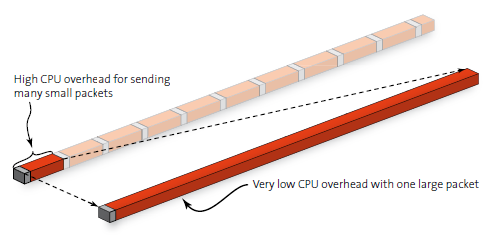

Enables or disables Jumbo Frame capability.

If large packets make up the majority of traffic and more latency can be tolerated, Jumbo Frames can reduce CPU utilization and improve wire efficiency.

Jumbo Frames have a strong impact on the performance of a GigE Vision system.

Jumbo Frames are Ethernet frames that carry more than the standard Ethernet MTU (Maximum Transmission Unit) of 1500 bytes.

Because fewer packets are needed for the same amount of data, less header data is transmitted, which leaves more capacity for user data.
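The effect of the MTU on header overhead can be estimated with a short calculation. The sketch below assumes IPv4, UDP and an 8-byte GVSP header inside each packet (36 bytes in total) and 38 bytes of Ethernet overhead on the wire per frame; these figures and the 1 MB image size are assumptions for illustration, and the real values depend on the camera and network configuration.

```python
import math

# Assumed headers inside the MTU: IPv4 (20) + UDP (8) + GVSP (8).
IP_UDP_GVSP_HEADER_BYTES = 20 + 8 + 8
# Assumed per-frame wire overhead: preamble (8), Ethernet header (14),
# FCS (4), inter-frame gap (12).
WIRE_OVERHEAD_BYTES = 8 + 14 + 4 + 12


def payload_per_packet(mtu_bytes: int) -> int:
    """Image bytes that fit into one packet of the given MTU."""
    return mtu_bytes - IP_UDP_GVSP_HEADER_BYTES


def packets_per_image(image_bytes: int, mtu_bytes: int) -> int:
    """Number of packets needed to transfer one image."""
    return math.ceil(image_bytes / payload_per_packet(mtu_bytes))


def wire_efficiency(mtu_bytes: int) -> float:
    """Share of the bytes on the wire that is image data."""
    return payload_per_packet(mtu_bytes) / (mtu_bytes + WIRE_OVERHEAD_BYTES)


image_bytes = 1_000_000  # hypothetical image size
for mtu in (1500, 9000):
    print(f"MTU {mtu}: {packets_per_image(image_bytes, mtu)} packets per image, "
          f"wire efficiency {wire_efficiency(mtu):.1%}")
```

Under these assumptions the larger MTU cuts the number of packets per image to roughly one sixth, which is where most of the CPU saving comes from.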

Usage Considerations

Enable Jumbo Frames only if devices across the network support them and are configured to use the same frame size.

When setting up Jumbo Frames on all involved network devices, be aware that different network devices calculate Jumbo Frame sizes differently.

Some devices include the header information in the frame size while others do not. Intel adapters do not include header information in the frame size.

Jumbo Frames only support TCP/IP.

Using Jumbo frames at 10 or 100 Mb/s can result in poor performance or loss of link.

When configuring Jumbo Frames on a switch, set the frame size 4 bytes higher for the CRC, plus another 4 bytes if using VLANs or QoS packet tagging.
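The switch rule above can be written as a small helper. This is a minimal sketch of that rule only; the function name and the example adapter frame size of 9014 bytes are hypothetical, so use the value actually configured on your adapter.

```python
CRC_BYTES = 4   # added for the CRC, as stated above
TAG_BYTES = 4   # added when VLANs or QoS packet tagging are used


def switch_frame_size(adapter_frame_size: int, tagged: bool = False) -> int:
    """Return the frame size to configure on the switch for a given adapter setting."""
    size = adapter_frame_size + CRC_BYTES
    if tagged:
        size += TAG_BYTES
    return size


adapter_frame_size = 9014  # example value, not a recommendation
print("untagged:", switch_frame_size(adapter_frame_size))
print("tagged:  ", switch_frame_size(adapter_frame_size, tagged=True))
```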

Lost packets or frames can occur when Jumbo Frames are activated in the network card and the camera.

This is normally a side effect of the other settings mentioned above, which only becomes visible with Jumbo Frames.

If you have trouble when using Jumbo Frames, look for the cause in those settings first instead of deactivating Jumbo Frames.

Interrupt Throttling (10 GigE only)

This parameter should be changed only if you observe performance problems with 10 GigE devices.

Windows throttling mechanism

Because multimedia programs require more resources, the Windows networking stack implements a throttling mechanism to restrict the processing of non-multimedia network traffic to 10 packets per millisecond.
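The limit of 10 packets per millisecond translates into a throughput ceiling that depends on the packet size. The sketch below is a rough upper bound that counts every byte of a full-size packet as data; the function name and the example packet sizes are assumptions for illustration.

```python
THROTTLE_PACKETS_PER_MS = 10  # limit stated above


def throttled_throughput_bps(packet_bytes: int) -> int:
    """Upper bound in bits per second while the throttling limit is active."""
    packets_per_second = THROTTLE_PACKETS_PER_MS * 1000
    return packets_per_second * packet_bytes * 8


for packet_bytes in (1500, 9000):
    mbps = throttled_throughput_bps(packet_bytes) / 1e6
    print(f"{packet_bytes}-byte packets: at most {mbps:.0f} Mb/s")
```

With standard 1500-byte packets the ceiling lies far below the capacity of a 1 Gb/s link, which is why this mechanism can matter for camera streams.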